How Banana Pro AI Fits into Motion Control

Performance marketing in the current digital landscape is defined by creative attrition. As audiences become increasingly desensitized to static imagery, the demand for dynamic video content has scaled beyond the capacity of traditional production pipelines. The primary friction point isn’t just generating “a video,” but generating a video that maintains brand coherence while executing specific camera behaviors and subject motions. Within this operational context, tools like Banana AI provide a bridge between raw generative power and the granular control required for professional-grade creative iteration.

The transition from static assets to motion requires a shift in how creative teams perceive the “shot.” When using Nano Banana Pro, the operator is no longer just a prompt engineer; they are a digital cinematographer balancing the physics of a virtual lens with the intent of a marketing brief. Understanding how to shape camera movement and pacing is essential for teams looking to maintain high-quality throughput without the overhead of a full-scale animation studio.

The Strategic Shift to Motion-First Workflows

For performance marketers, the goal of incorporating motion is rarely about cinematic storytelling in the traditional sense. It is about stopping the scroll and directing the eye. The use of Nano Banana Pro allows for the rapid transformation of static concepts into high-impact motion assets. However, the effectiveness of these assets depends on the operator’s ability to dictate motion rather than simply accepting whatever the model generates.

Effective motion control begins with the realization that generative video is inherently probabilistic. When you input a prompt or an initial frame into an AI Image Editor, you are setting a foundation, but the “motion weight” and directional guidance determine whether the result is a professional asset or a visual distraction. Systems like Nano Banana offer a structured environment where these variables can be tuned to match specific platform requirements—whether that is the fast-paced nature of a TikTok ad or the more polished aesthetic of a YouTube pre-roll.

Shaping Camera Movement: Precision vs. Fluidity

Camera movement is the most direct way to influence the viewer’s psychological response to a video. A slow zoom-in creates intimacy and focus, while a lateral pan suggests a reveal or a change in perspective. In Nano Banana Pro, the operator must decide how much agency to give the model regarding these movements.

A common pitfall in generative video is “camera drift,” where the perspective shifts in a way that feels unmoored or physically impossible. To counter this, operators anchor the virtual camera by naming the move explicitly in their prompts. By defining the axis of movement, whether a dolly, a crane shot, or a simple zoom, you reduce the model’s tendency to hallucinate background distortions.
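One way to make this habit repeatable is to keep a small vocabulary of named camera moves and insert exactly one into every prompt, together with a statement of what stays fixed. The sketch below is plain Python string templating under that assumption; the move names, their wording, and the `build_motion_prompt` helper are hypothetical illustrations, not Banana Pro prompt syntax or an official API.

```python
# Hypothetical vocabulary of camera moves. The wording is illustrative,
# not an official Banana Pro prompt format.
CAMERA_MOVES = {
    "dolly_in": "slow dolly in toward the subject, camera on a fixed track",
    "dolly_out": "slow dolly out away from the subject, camera on a fixed track",
    "crane_up": "smooth crane up, camera rises vertically",
    "pan_left": "steady lateral pan to the left, camera height fixed",
    "zoom_in": "gentle zoom in, camera position locked",
    "static": "locked-off static camera, no camera movement",
}


def build_motion_prompt(scene: str, move: str) -> str:
    """Combine a scene description with exactly one named camera move."""
    if move not in CAMERA_MOVES:
        raise ValueError(f"unknown camera move: {move!r}")
    # Naming a single axis and stating what stays fixed leaves the model
    # less room to invent extra camera motion.
    return f"{scene}. Camera: {CAMERA_MOVES[move]}. Background remains stable."


print(build_motion_prompt("White sneaker on a concrete plinth, soft studio light", "dolly_in"))
```

Keeping the vocabulary in one place also makes results easier to compare across a campaign, because every clip describes its camera move the same way.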

It is worth noting a significant limitation here: current generative models, including Nano Banana, can sometimes struggle with complex three-dimensional spatial awareness. If a camera movement is too aggressive—such as a 360-degree orbit around an intricate object—the geometry of the subject may “melt” or lose consistency. Practical judgment suggests that for commercial assets, subtle, purposeful camera movements often yield more professional results than extreme maneuvers that push the model beyond its current spatial stability.

Subject Motion and the Preservation of Coherence

Subject motion refers to the internal movement within the frame—a model walking, a liquid pouring, or a vehicle driving. In the context of Banana Pro, controlling subject motion is arguably more difficult than controlling the camera because it requires the AI to understand the structural integrity of the object it is animating.

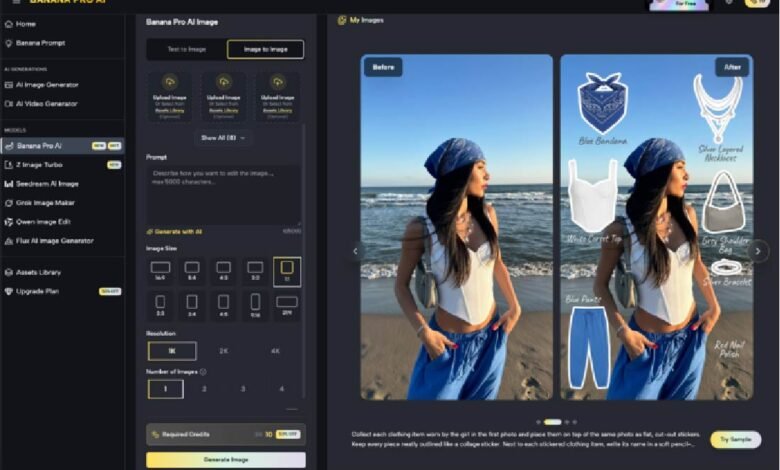

When a performance marketer needs to showcase a product, the product must remain recognizable. If the motion setting is too high, a sneaker might morph into a different shoe mid-stride. Within the Nano Banana framework, operators often find success by starting with a high-quality base image from an AI Image Editor and then applying conservative motion settings. This “Image-to-Video” approach helps keep the primary subject locked in its visual identity while only specific elements, such as hair or clothing, react to the virtual environment.

There is an inherent uncertainty in how models interpret fluid dynamics or complex human gestures. A hand wave might produce extra fingers, or a splash of water might move in a way that defies gravity. In these instances, the operator’s role is to iterate. Instead of seeking a perfect “one-shot” generation, the workflow should budget for a 20-30% “failure rate,” where the motion doesn’t align with physical reality. Resetting expectations this way is vital for commercial planning: timelines must allow for “re-rolls” so that the final output meets brand standards.
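The re-roll allowance can be turned into a concrete generation budget. A minimal sketch, assuming each attempt succeeds or fails independently with the same probability (a simplification, since real failures often cluster around difficult prompts): the number of attempts per usable clip then follows a geometric distribution with mean 1 / (1 - failure rate). The function name `planned_generations` is hypothetical.

```python
import math


def planned_generations(usable_clips: int, failure_rate: float) -> int:
    """Expected number of generations for a target count of usable clips,
    rounded up, assuming independent attempts with a fixed failure rate."""
    if not 0 <= failure_rate < 1:
        raise ValueError("failure_rate must be in [0, 1)")
    success_rate = 1 - failure_rate
    # Attempts per success average 1 / success_rate for independent trials.
    return math.ceil(usable_clips / success_rate)


for rate in (0.20, 0.30):
    print(rate, planned_generations(10, rate))  # prints "0.2 13", then "0.3 15"
```

With the 20-30% range above, ten usable clips correspond to 13 to 15 generations on average. Because this is an expected value, some batches will need more, so a deadline-critical campaign may want an extra buffer on top.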

Pacing and Timing for High-Conversion Assets

Pacing is the rhythmic structure of the motion. In performance marketing, the first three seconds are the most critical. If the motion is too slow to start, the viewer scrolls past. If it is too fast, the message is lost.

Nano Banana allows for the adjustment of temporal consistency, which influences how much “change” happens between frames. A higher temporal weight leads to smoother, more consistent motion, which is ideal for lifestyle content. A lower weight can create a more jittery, high-energy effect that might work for certain streetwear or tech-focused ads.

The operator must align the pacing of the video with the intended call to action (CTA). For example, if the ad is meant to convey a sense of calm and luxury, the camera movement should be a slow, steady track, and the subject motion should be minimal. Conversely, a clearance sale ad might benefit from rapid zooms and energetic subject movement. Banana AI provides the tools to toggle these moods, but the commercial awareness must come from the human lead.
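The two moods in this example can be captured as reusable presets, so every variant in a campaign starts from the same settings. This is a sketch only: `MotionPreset`, the 0-1 scales, and the numbers are hypothetical stand-ins for whatever controls the tool actually exposes, and the values should be tuned against real test generations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MotionPreset:
    camera_move: str        # plain-language camera instruction
    subject_motion: float   # hypothetical 0-1 scale: 0 = still, 1 = maximum movement
    temporal_weight: float  # hypothetical 0-1 scale: higher = smoother frame-to-frame change


# Illustrative values only, following the calm-luxury vs. clearance-sale example.
PRESETS = {
    "calm_luxury": MotionPreset("slow, steady tracking shot", subject_motion=0.15, temporal_weight=0.9),
    "clearance_sale": MotionPreset("rapid zoom in", subject_motion=0.7, temporal_weight=0.4),
}


def preset_for(mood: str) -> MotionPreset:
    """Look up the preset for an ad mood, failing loudly if none is defined."""
    try:
        return PRESETS[mood]
    except KeyError:
        raise ValueError(f"no preset defined for mood {mood!r}") from None


print(preset_for("calm_luxury"))
```

Keeping the presets in one table makes the human decision explicit: the mapping from brief to motion is written down and reviewed rather than re-improvised on every generation.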

Integrating Banana Pro into the Asset Pipeline

For a content team, the goal is to move from an “experimental” use of AI to a “production-ready” pipeline. This requires a systematic approach to asset creation. A typical workflow using the Banana Pro ecosystem might look like this:

- Concept and Static Generation: Use the AI Image Editor to create the “hero” frame. This ensures the lighting, composition, and branding are perfected before motion is even considered.

- Motion Mapping: Decide on the primary motion objective. Is it a camera move or a subject move?

- Nano Banana Implementation: Upload the hero frame and apply motion parameters. Start with a medium motion weight to test the model’s interpretation of the scene.

- Refinement: If the motion is coherent but the pacing is off, adjust the frame rate or temporal settings. If the subject is morphing, reduce the motion weight.

- Post-Production: Generative video is rarely the final step. Operators should expect to bring these clips into a traditional editor for color grading, text overlays, and sound design.
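The refinement step in this workflow is essentially a small decision rule applied after each test generation. A minimal sketch follows; the settings fields, the 0-1 scales, the 0.1 step size, and the review verdicts are all hypothetical, and the direction of the pacing adjustment is an assumption based on the description of temporal weight given earlier (higher means less change between frames).

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class MotionSettings:
    motion_weight: float = 0.5    # hypothetical 0-1 scale; 0.5 is the "medium" starting point
    temporal_weight: float = 0.5  # hypothetical 0-1 scale; higher means smoother motion


@dataclass(frozen=True)
class Review:
    subject_morphing: bool = False  # the product lost its visual identity
    pacing: str = "ok"              # "ok", "too_fast", or "too_slow"


def clamp(value: float) -> float:
    """Keep a setting inside the 0-1 range."""
    return min(1.0, max(0.0, value))


def next_settings(current: MotionSettings, review: Review, step: float = 0.1) -> Optional[MotionSettings]:
    """Return adjusted settings for the next re-roll, or None if the clip is approved."""
    if review.subject_morphing:
        # Coherence comes first: lower the motion weight and re-roll.
        return replace(current, motion_weight=clamp(current.motion_weight - step))
    if review.pacing == "too_fast":
        # Assumed mapping: more temporal weight means less change per frame.
        return replace(current, temporal_weight=clamp(current.temporal_weight + step))
    if review.pacing == "too_slow":
        return replace(current, temporal_weight=clamp(current.temporal_weight - step))
    if review.pacing != "ok":
        raise ValueError(f"unknown pacing verdict: {review.pacing!r}")
    return None  # coherent and well paced: hand off to post-production
```

Checking morphing before pacing mirrors the priorities in the list: a clip whose product has changed identity is unusable no matter how well it is paced.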

This systems-minded approach treats Nano Banana as a specialized tool within a larger kit. It recognizes that while the AI does the heavy lifting of frame interpolation, the human operator provides the strategic guardrails.

Navigating Technical Limitations and Operational Reality

No discussion of generative motion control is complete without a grounded assessment of its limitations. While Nano Banana Pro offers significant advancements in control, it is not a “magic button” for perfect cinematography.

One major limitation is “temporal flickering,” where lighting or textures change slightly from one frame to the next. Recent updates have reduced this, but it remains a factor that operators must account for, particularly in high-contrast scenes. Additionally, the lack of precise “keyframing” (the ability to specify exactly where an object should be at second four) means that operators are guiding a process rather than commanding it.

Furthermore, there is a ceiling on the duration of high-coherence clips. Generating a 3-5 second clip with perfect stability is relatively straightforward; maintaining that same level of detail over 15-30 seconds remains a challenge for most generative models. Performance marketers typically solve this by generating multiple short, high-quality “bursts” and stitching them together in post-production, rather than attempting to generate a single long-form video in one pass.
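The burst-and-stitch approach comes down to simple arithmetic: divide the target runtime by the longest clip length the team trusts to stay coherent, round up, and split evenly. The sketch assumes hard cuts between clips (crossfades would consume some overlap) and a 5-second default ceiling taken from the 3-5 second range above; `plan_bursts` is a hypothetical helper.

```python
import math


def plan_bursts(target_seconds: float, max_clip_seconds: float = 5.0) -> list[float]:
    """Split a target runtime into the fewest equal clips that each stay
    within max_clip_seconds (hard cuts assumed, so no overlap is needed)."""
    if target_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(target_seconds / max_clip_seconds)
    return [target_seconds / count] * count


print(plan_bursts(15))  # [5.0, 5.0, 5.0]
print(plan_bursts(22))  # [4.4, 4.4, 4.4, 4.4, 4.4]
```

Splitting evenly, rather than filling every clip to the ceiling and leaving a short remainder, keeps each burst at a similar length, which tends to make the stitched rhythm feel more deliberate.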

The Commercial Logic of AI Motion Control

The adoption of Banana AI and its associated tools is driven by commercial necessity. In an era where creative testing is the primary lever for ad performance, the ability to produce 50 variations of a video ad in the time it used to take to produce one is a massive competitive advantage.

However, the value isn’t just in the speed; it’s in the ability to pivot. If data shows that “slow zoom” creative is outperforming “fast pan” creative, an operator using Nano Banana can update the entire campaign’s visual language in an afternoon. This level of agility was previously impossible for smaller teams or those without massive production budgets.
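The 50-variation figure is easier to plan as a test matrix: a few creative axes multiplied together. In the sketch below, five camera moves, five opening hooks, and two pacing styles give 5 × 5 × 2 = 50 briefs. The axis names and options are illustrative assumptions, and the code only enumerates briefs; it does not call any Banana AI service.

```python
from itertools import product


def variation_matrix(axes: dict[str, list[str]]) -> list[dict[str, str]]:
    """Return every combination of the creative test axes, one dict per variant."""
    names = list(axes)
    return [dict(zip(names, combo)) for combo in product(*axes.values())]


axes = {
    "camera": ["slow zoom", "fast pan", "dolly in", "crane up", "static"],
    "hook": ["product close-up", "lifestyle scene", "price callout", "unboxing", "before/after"],
    "pacing": ["calm", "energetic"],
}

variants = variation_matrix(axes)
print(len(variants))  # 50

# Suppose test data shows "slow zoom" outperforming "fast pan": keep only the winning move.
next_round = [v for v in variants if v["camera"] == "slow zoom"]
print(len(next_round))  # 10
```

When results favor one option, filtering the matrix on that axis produces the brief list for the next round, which is the kind of campaign-wide pivot described above.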

Ultimately, shaping camera movement and subject motion is about reducing the distance between an idea and its execution. By mastering the controls within the Banana Pro interface, marketers can ensure that their motion assets are not just “AI-generated,” but are strategically crafted to drive engagement.

A Practical Outlook on Creative Evolution

As we look at the trajectory of tools like Banana AI, the focus is clearly moving toward more granular control. We are moving away from a world of “surprising” results toward a world of “intended” results. For the performance marketer, this means that the skill set required is shifting from prompt writing to creative direction.

The successful operator is one who understands the physics of a camera, the importance of subject stability, and the psychological impact of pacing. They use Nano Banana Pro not as a shortcut, but as a high-velocity production studio. While the technology will continue to evolve and current limitations like spatial warping or flickering will likely diminish, the need for a commercially aware operator to shape that motion will remain constant.

By prioritizing workflow over hype and focusing on the practical application of motion control, creative teams can leverage these tools to build sustainable, high-performance asset pipelines that meet the demands of modern digital advertising. The most successful teams will likely be those who embrace the iterative nature of the technology, acknowledging its current flaws while capitalizing on its unprecedented speed and flexibility.